|

[GlT] MIT가 로봇의 새로운 공간지각 능력 모델 개발 | |||

| 컴퓨팅 초기부터 사람들은 “부엌에 가서 커피 잔 가져와”와 같은, 오늘날... |

|

|  |

어떤 신기술이 세상을 극적으로 변화시킬까? 세계 최고의 연구소에서 나오는 놀라운 혁신을 독점 소개합니다.

컴퓨팅 초기부터 사람들은 “부엌에 가서 커피 잔 가져와”와 같은, 오늘날 아마존이 제공하는 알렉사(Alexa) 음성비서 수준의 고도의 명령을 따르는 로봇 하인을 상상해 왔다. 그러나 MIT 공학자들이 설명한 바와 같이, 이러한 높은 수준의 작업 수행은 로봇이 인간처럼 물리적 환경을 인식할 수 있어야 한다는 것을 의미한다.

실제 세상에서 어떤 일을 하려면, 주변 환경에 대한 멘털(mental) 모델이 있어야 한다. 이것은 인간에게는 쉬운 일이다. 하지만 로봇의 경우 카메라를 통해 보는 픽셀 값을 세상에 대한 이해로 변환해야하는 매우 어려운 문제이기도 하다.

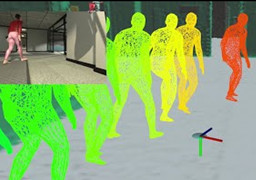

다행히도, 앞서 언급한 MIT 공학자들이 인간이 세상을 인식하고 탐색하는 방식을 모델로 한 로봇에 대한 공간 인식 표현을 개발해냈다. 3D 동적 장면 그래프(3D Dynamic Scene Graphs)로 불리는 이 새로운 모델을 사용하면 로봇이 ‘사물’, ‘사람, 방, 벽, 테이블, 의자’와 같은 시맨틱 레이블(semantic labels), 그리고 로봇이 그들 환경에서 볼 수 있을 것 같은 기타 구조들이 포함되는 주변 환경 3D 지도를 신속하게 생성할 수 있도록 해준다. 또한 이 모델을 통해 로봇은 3D 지도에서 관련 정보를 추출하고 경로에서 물체, 방 또는 움직이는 사람의 위치를 쿼리할 수 있다.

이렇게 환경이 압축되는 것은 로봇에게 유용한데, 신속하게 결정을 내리고 경로를 계획할 수 있도록 해주기 때문이다. 더불어 이것은 우리 인간이 하는 일과 그리 다르지 않은 것이다. 집에서 직장까지의 경로를 생각할 때, 고려할 필요가 있는 모든 요소를 생각하진 않을 것이다. 우리는 각 거리와 랜드마크 정도의 수준에서만 생각하고, 그것이 더 빠른 경로를 계획하는 것을 돕는다.

가사 도우미 이상으로, 연구원들은 이러한 새로운 종류의 환경 멘털 모델을 채택한 로봇이 공장에서 사람들과 나란히 작업하거나 재난 현장에서 생존자를 찾는 것과 같은 다른 높은 수준의 작업에도 적합 할 수 있다고 말한다.

이 연구는 최근 “2020 Robotics : Science and Systems 가상 컨퍼런스”에서 발표되었다.

이 연구가 왜 중요할까? 지금까지 로봇 비전과 내비게이션은 주로 두 가지 경로를 따라 발전해왔다. 첫 번째는 로봇이 실시간으로 탐색하면서 환경을 3차원으로 재구성할 수 있도록 하는 3D 매핑이다. 두 번째는 시맨틱 분할을 활용하는 것이었는데, 이것은 로봇이 자동차 Vs 자전거와 같은 시맨틱 객체로서 환경의 특징들을 분류하는 데 도움을 준다. 다만 시맨틱 분할은 지금까지는 대부분 2D 이미지를 통해 수행되었다. 그러나 MIT가 개발한 이 새로운 공간 지각 모델은 실시간으로 환경 3D 지도를 생성하는 동시에 해당 3D 지도 내에서 물체, 사람 및 구조에 레이블을 지정하는 최초의 모델이다.

- References

To view or purchase this article, please visit:

https://www.researchgate.net/publication/342881852_3D_Dynamic_Scene_Graphs_Actionable_Spatial_Perception_with_Places_Objects_and_Humans

To view a related video media, from Massachusetts Institute of Technology, please visit:

https://www.youtube.com/watch?v=SWbofjhyPzI

|  |

|

Since the earliest days of computing, people have imagined robotic servants able to follow high-level, Alexa-type commands, such as “Go to the kitchen and fetch me a coffee cup.” But as MIT engineers explain carrying out such high-level tasks means that robots will have to be able to perceive their physical environment as humans do.

In order to function in the world, you need to have a mental model of the environment around you. This is something that’s effortless for humans. But for robots, it’s a painfully hard problem, which requires transforming pixel values that they see through a camera, into an understanding of the world.

Fortunately, these MIT engineers have developed a representation of spatial perception for robots that is modeled after the way humans perceive and navigate the world. The new model, called 3D Dynamic Scene Graphs, enables a robot to quickly generate a 3D map of its surroundings that also includes objects and their semantic labels such as people, rooms, walls, tables, chairs, and other structures that the robot is likely to see in its environment. The model also allows the robot to extract relevant information from the 3D map and to query the location of objects, rooms, or the moving people in its path.

This compressed representation of the environment is useful because it allows a robot to quickly make decisions and plan its path. This is not too far from what we do as humans. If you need to plan a path from your home to work, you don’t plan every single position you need to take. You just think at the level of streets and landmarks, which helps you plan your route faster.

Beyond domestic helpers, the researchers say robots that adopt this new kind of mental model of the environment may also be suited for other high-level jobs, such as working side-by-side with people on a factory floor or exploring a disaster site for survivors.

The research presented recently at the 2020 Robotics: Science and Systems virtual conference.

Why is this important? Until now, robotic vision and navigation have advanced mainly along two routes: the first involves 3D mapping that enables robots to reconstruct their environment in three dimensions as they explore in real-time; and the second uses semantic segmentation, which helps a robot classify features in its environment as semantic objects, such as a car versus a bicycle, which so far is mostly done with 2D images. The new MIT model of spatial perception is the first to generate a 3D map of the environment in real-time, while also labeling objects, people, and structures within that 3D map.

The key component of the team’s new model is Kimera, an open-source library that the team previously developed to simultaneously construct a 3D geometric model of an environment, while encoding the likelihood that an object is, say, a chair versus a desk. Like the mythical creature that is a mix of different animals, the team wanted Kimera to be a mix of mapping and semantic understanding in 3D.

Kimera works by taking in streams of images from a robot’s camera, as well as inertial measurements from onboard sensors, to estimate the trajectory of the robot or camera and to reconstruct the scene as a 3D mesh, all in real-time.

To generate a semantic 3D mesh, Kimera uses an existing neural network trained on millions of real-world images, to predict the label of each set of pixels, and then projects these labels in 3D using a technique known as ray-casting, commonly used in computer graphics for real-time rendering.

The result is a map of a robot’s environment that resembles a dense, three-dimensional mesh, where each face is color-coded as part of the objects, structures, and people within the environment.

If a robot were to rely on this mesh alone to navigate through its environment, it would be a computationally expensive and time-consuming task. So the researchers developed algorithms to construct 3D dynamic “scene graphs” from Kimera’s initial, highly dense, 3D semantic mesh. In the case of the 3D dynamic scene graphs, the associated algorithms abstract, or break down, Kimera’s detailed 3D semantic mesh into distinct semantic layers, such that a robot can “see” a scene through a particular layer, or lens. This layered representation avoids a robot having to make sense of billions of points and faces in the original 3D mesh. Within the layer of objects and people, the researchers have also been able to develop algorithms that track the movement and the shape of humans in the environment in real-time.

This is essentially enabling robots to have mental models similar to the one humans use. And it is expected to impact many applications, including self-driving cars, search and rescue, collaborative manufacturing, and domestic robots.

References

Robotics Science and Systems, July 12-16, 2020, “3D Dynamic scene graphs: Actionable spatial perception with places, objects, and humans,” by Antoni Rosinol, et al. © 2020 RSS. All rights reserved.

To view or purchase this article, please visit:

https://www.researchgate.net/publication/342881852_3D_Dynamic_Scene_Graphs_Actionable_Spatial_Perception_with_Places_Objects_and_Humans

To view a related video media, from Massachusetts Institute of Technology, please visit:

https://www.youtube.com/watch?v=SWbofjhyPzI

_아침독서상단(1).jpg)

.jpg)

.jpg)

.jpg)